Breaking news

The latest results achieved by the project consortium.

Reaching Activity in the Medial Posterior Parietal Cortex of Monkeys Is Modulated by Visual Feedback

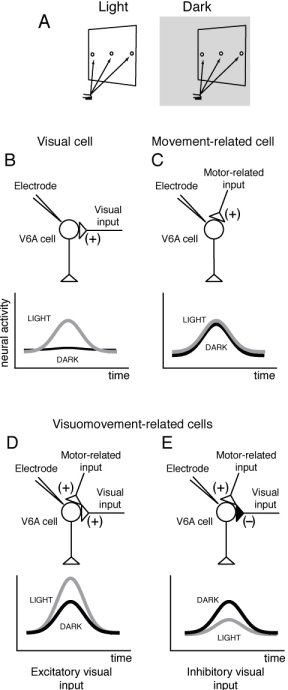

A) Experimental setup. Reaching movements were performed in the light and in the dark (gray-shaded background), from a home button (black rectangle) toward one of three targets located on a panel in front of the animal. B-E) Different weights and signs of visual feedback inputs on V6A reaching cells. Schematic representation of the inputs impinging upon V6A cells (top) and of their neural activity (bottom).

Reaching and grasping an object is an action that we perform successfully in the light and in the dark. In the dark, reaching movements rely on efferent copies of motor signals and proprioceptive reafferent signals from the moving limb. Reaching in the light, however, relies not only on efferent motor and reafferent proprioceptive signals but also on visual information about the target to be reached, the moving forelimb, and the surroundings (Carlton, 1981; Prablanc et al., 1986). In the absence of visual feedback, movement performance quickly deteriorates (Woodworth, 1899; Vince, 1948; Keele and Posner, 1968; Carlton, 1981; Meyer et al., 1988; Ma-Wyatt and McKee, 2007).

In the past, we demonstrated that reaching movements performed in the dark modulate the activity of neurons in medial posterior parietal area V6A (Fattori et al., 2001), and that V6A neurons are sensitive to arm position and to arm movement direction (Breveglieri et al., 2002; Fattori et al., 2005). In the present work we tested whether the reach-related activity and its spatial tuning are influenced by the presence of visual feedback (Bosco et al., 2010). To answer this question, animals were trained in an instructed-delay task to reach visual targets at various locations in the light and in the dark. Reaching activity in the light condition may reflect a copy of efferent commands, as well as proprioceptive and visual afferent feedback, whereas in the dark condition reaching activity may reflect only somatosensory and/or movement-related input.

The task performed by the animal is summarized in figure 1A.

A trial begins when the monkey presses the button near its chest. After button pressing, the animal is free to look around and is not required to perform any eye or arm movements. After 200-1000 ms, one of the LEDs lights up green. The monkey has to fixate the LED and wait for its change in color without performing any eye or arm movement. After a delay period of 500-2500 ms, the LED color changes from green to red. This event is the go signal for the monkey to release the button and perform an arm-reaching movement to reach the LED and press it. The animal then keeps its hand on the LED until it switches off (after 500-1200 ms). This event cues the animal to release the LED and return to the home button. The time sequence was the same in trials performed in the dark and in the light. In the light, the animal saw the panel, and therefore the reaching targets, as well as its arm moving in the peripersonal space.
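The trial sequence described above can be laid out as an ordered list of phases. The following is only an illustrative sketch, not the software used in the experiment: it reads the three timed intervals as 200-1000 ms, 500-2500 ms and 500-1200 ms, and it assumes that each interval is drawn uniformly from its range. The names `INTERVALS_MS` and `sample_trial` are hypothetical.

```python
import random

# Interval ranges in ms, as read from the task description above.
INTERVALS_MS = {
    "free_period": (200, 1000),  # button pressed, no LED lit yet
    "delay": (500, 2500),        # LED green: fixate and wait
    "hold": (500, 1200),         # hand on LED until it switches off
}


def sample_trial(rng, condition):
    """Build the ordered phases of one hypothetical trial.

    Assumption: each timed interval is drawn uniformly from its range.
    Reach and return durations depend on the animal, so they are None.
    """
    if condition not in ("light", "dark"):
        raise ValueError("condition must be 'light' or 'dark'")

    def draw(name):
        low, high = INTERVALS_MS[name]
        return rng.randint(low, high)

    return [
        ("free_period", draw("free_period")),  # ends when the LED turns green
        ("delay", draw("delay")),              # ends at the green-to-red go signal
        ("reach", None),                       # release button, reach, press LED
        ("hold", draw("hold")),                # ends when the LED switches off
        ("return", None),                      # release LED, go back to home button
    ]


# The phase sequence is identical in both conditions; only the lighting differs.
rng = random.Random(0)
for condition in ("light", "dark"):
    print(condition, sample_trial(rng, condition))
```

Keeping the animal-paced phases as `None` makes explicit which durations are set by the experimenter and which are measured from behavior.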

We found that about 85% of V6A neurons (127/149) were significantly related to the task in at least one of the two conditions. The majority of task-related cells (69%) showed reach-related activity in both visual conditions, some were modulated only in the light (15%), and others only in the dark (16%). The sight of the moving arm often dramatically changed the cell's response to arm movements. In some cases the reaching activity was enhanced, and in others it was reduced or disappeared altogether. In fact, we encountered three types of neurons (shown in figure 1B-E). Movement-related neurons (figure 1C) displayed an increase in activity for reaching in the dark, and their activity was unchanged when reaching in the light. Visual-related neurons (figure 1B) responded only to reaching in the light. Visuomovement neurons responded to reaching in the dark, and their responses were either enhanced (figure 1D) or reduced (figure 1E) during reaching in the light.
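The reported proportion and the three cell types can be restated as a short sketch. This is a simplified illustration rather than the analysis used in the study: the function `classify_cell` and its arguments are hypothetical, and they stand in for the outcome of statistical tests that are not described here.

```python
# Counts reported in the text.
RECORDED = 149
TASK_RELATED = 127

# Shares of task-related cells by condition, as reported in the text (percent).
SHARES = {"both conditions": 69, "light only": 15, "dark only": 16}


def classify_cell(responds_in_dark, responds_in_light, light_vs_dark):
    """Label a cell following the three types described in the text.

    All arguments are hypothetical summaries of statistical tests.
    light_vs_dark is "same", "enhanced" or "reduced" and compares the
    reach-related activity in the light with that in the dark.
    """
    if not responds_in_dark:
        # No response in the dark: either driven by vision or not task-related.
        return "visual-related" if responds_in_light else "not task-related"
    if light_vs_dark == "same":
        return "movement-related"
    if light_vs_dark in ("enhanced", "reduced"):
        return "visuomovement (" + light_vs_dark + " in light)"
    raise ValueError("light_vs_dark must be 'same', 'enhanced' or 'reduced'")


print(round(100 * TASK_RELATED / RECORDED))  # 85, i.e. "about 85%"
print(sum(SHARES.values()))                  # 100, the three shares are exhaustive
print(classify_cell(True, True, "enhanced"))
```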

The present data support the view that V6A cells monitor the ongoing activity taking into account visual information as well as somato-sensory/-motor information.

Vision of the moving arm modulates the reaching activity of V6A neurons in a complex way, increasing or decreasing it according to the type of cell considered. We suggest that the contribution of the different cell types found in V6A to the control of reaching movements depends on the availability of sensory information. Some V6A cells may monitor the arm movement on the basis of somato-sensory/-motor inputs, because they do not receive visual input, or their visual inputs are canceled out. Other V6A cells may use the visual information to monitor the ongoing arm movement, as well as hand/object interaction. These data provide empirical support for computational models suggesting task-dependent reweighting of sensory signals dictated by the information content of the visual feedback when the action occurs (Sober and Sabes, 2005; McGuire and Sabes, 2009), and can inspire future research on artificial intelligent systems able to act in space under different sensory input conditions.
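To give a concrete feel for what reweighting of sensory signals can mean, the sketch below uses a generic reliability-weighted average of a visual and a proprioceptive estimate of hand position. It is a textbook-style illustration under stated assumptions, not the model of the cited papers and not an analysis from this study. The function `combine_estimates`, its parameters and all numbers are hypothetical.

```python
import math


def combine_estimates(visual, proprio, visual_var, proprio_var):
    """Inverse-variance weighted average of two position estimates.

    Assumption: each cue is weighted by its reliability (1 / variance).
    An infinite variance means the cue carries no information, for
    example vision of the arm when reaching in the dark.
    """
    if visual_var <= 0 or proprio_var <= 0:
        raise ValueError("variances must be positive")
    if math.isinf(visual_var) and math.isinf(proprio_var):
        raise ValueError("at least one cue must be informative")
    inv_visual = 1.0 / visual_var    # 0.0 when visual_var is infinite
    inv_proprio = 1.0 / proprio_var
    w_visual = inv_visual / (inv_visual + inv_proprio)
    return w_visual * visual + (1.0 - w_visual) * proprio


# Hypothetical hand-position estimates (arbitrary units).
in_light = combine_estimates(10.0, 12.0, visual_var=1.0, proprio_var=4.0)
in_dark = combine_estimates(10.0, 12.0, visual_var=math.inf, proprio_var=4.0)
print(in_light)  # lies closer to the visual estimate
print(in_dark)   # equals the proprioceptive estimate
```

With reliable vision the combined estimate is pulled toward the visual cue. When visual feedback is removed, the weight on vision drops to zero and the estimate rests on proprioception alone.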

References

Bosco A, Breveglieri R, Chinellato E, Galletti C, Fattori P (2010) Reaching activity in the medial posterior parietal cortex of monkeys is modulated by visual feedback. J Neurosci 30:14773-14785.

Breveglieri R, Kutz DF, Fattori P, Gamberini M, Galletti C (2002) Somatosensory cells in the parieto-occipital area V6A of the macaque. Neuroreport 13:2113-2116.

Carlton LG (1981) Visual information: the control of aiming movements. Q J Exp Psychol A 33:87-93.

Fattori P, Gamberini M, Kutz DF, Galletti C (2001) Arm-reaching neurons in the parietal area V6A of the macaque monkey. Eur J Neurosci 13:2309-2313.

Fattori P, Kutz DF, Breveglieri R, Marzocchi N, Galletti C (2005) Spatial tuning of reaching activity in the medial parieto-occipital cortex (area V6A) of macaque monkey. Eur J Neurosci 22:956-972.

Keele SW, Posner MI (1968) Processing of visual feedback in rapid movements. J Exp Psychol 77:155-158.

Ma-Wyatt A, McKee SP (2007) Visual information throughout a reach determines endpoint precision. Exp Brain Res 179:55-64.

McGuire LM, Sabes PN (2009) Sensory transformations and the use of multiple reference frames for reach planning. Nat Neurosci 12:1056-1061.

Meyer DE, Abrams RA, Kornblum S, Wright CE, Smith JE (1988) Optimality in human motor performance: ideal control of rapid aimed movements. Psychol Rev 95:340-370.

Prablanc C, Pélisson D, Goodale MA (1986) Visual control of reaching movements without vision of the limb. I. Role of retinal feedback of target position in guiding the hand. Exp Brain Res 62:293-302.

Sober SJ, Sabes PN (2005) Flexible strategies for sensory integration during motor planning. Nat Neurosci 8:490-497.

Vince MA (1948) Corrective movements in a pursuit task. Q J Exp Physiol Cogn Med Sci 1:85-103.

Woodworth RS (1899) The accuracy of voluntary movement. Psychol Rev Monogr 3:1-106.

Annalisa Bosco(1), Rossella Breveglieri(1), Eris Chinellato(2), Claudio Galletti(1), Patrizia Fattori(1)

University of Bologna (1)

Department of Psychology

Universitat Jaume I de Castellon (2)

Department of Engineering and Computer Science
