Breaking news


The latest results achieved by the project consortium.

Reading out Binocular Energy Population Codes for Short-latency Disparity-vergence Eye Movements

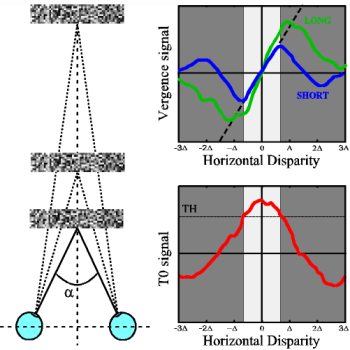

Fig 1: (left) Simulated experimental setup in which the eyes verge on planes at different depths. (right) The LONG and SHORT signals used to drive vergence, and the T0 signal used to switch between the LONG and SHORT modes.

The ability of the human brain to fuse the images projected on the left and right retinas, and thus to achieve depth perception, depends directly on its ability to produce and maintain a stable fixation, i.e. to make both eyes fixate the same point in the world. This task is carried out by vergence eye movements (Fig.1 left).

Experimental evidence shows that, although both depth perception and vergence eye movements rely on the activity of complex cells in the primary visual cortex, the brain adopts specific, separate mechanisms to combine binocular information for the two distinct tasks. We define the fusibility range (FR) as the range of disparities over which a distributed population of disparity detectors is able to fuse the left and right retinal images and hence to compute a disparity map. At each image location, the population can be decoded with a centre-of-mass strategy, in which each cell's response is weighted by its preferred disparity, thereby taking a decision on the disparity value. Vergence control models based on a distributed population of disparity detectors usually require the disparity map to be computed first, which confines the vergence system to operating inside the FR.

The model we developed, which mimics the behaviour of cells in the Medial Superior Temporal area [Takemura et al., Journal of Neurophysiology, 85:2245-2266, 2001], combines the population responses without taking a decision, and instead extracts a disparity-vergence response that allows us to nullify the disparity in the fovea even when the stimulus lies far beyond the FR. The disparity-vergence response is obtained as a weighted sum of the population responses, where the weights are computed by minimizing a functional that embeds two specific goals: (1) to obtain signals proportional to horizontal disparities, and (2) to make these signals insensitive to the presence of vertical disparities. The desired horizontal disparity tuning curve for vergence is odd-symmetric, with a linear segment passing smoothly through zero disparity. This segment defines a critical servo range, over which changes in the horizontal disparity of the stimulus elicit roughly proportional changes in the horizontal vergence angle.

Following the Dual Mode theory [Hung et al., IEEE Trans. on Biomedical Engineering, 33:1021-1028, 1986], the model provides two distinct vergence control mechanisms: a fast mode enabled in the presence of large disparities, and a slow mode enabled in the presence of small disparities (cf. the LONG and SHORT signals in Fig.1 right). Moreover, we extract an additional signal, T0, which is sensitive to zero disparity and automatically switches between LONG and SHORT depending on the disparities present in the scene.
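To make the contrast between decision-based decoding and a decision-free weighted-sum readout concrete, here is a minimal Python sketch on a toy population. Everything in it is an assumption for illustration, not the published model: the idealised phase-shift tuning curve in `unit_response`, the parameters `OMEGA` and `SIGMA`, the eight phases and three orientations, the target tunings, and the ridge-regularised least-squares functional in `fit_readout`, which is just one simple way to encode goals (1) and (2). `centre_of_mass` takes a decision on disparity; in this toy its output follows sin(`OMEGA` * dh), so its sign is reliable only for |dh| < pi / `OMEGA`. `readout` sums all unit responses with the fitted weights. The sketch does not attempt to reproduce the model's behaviour beyond the FR.

```python
import math

# Toy population (assumed values): one spatial frequency, eight phase shifts,
# three orientations. None of these numbers come from the article.
OMEGA = 1.0                 # phase (rad) per unit of disparity along the unit's axis
SIGMA = 2.0                 # width of the response envelope
HALF = [(2 * k + 1) * math.pi / 8 for k in range(4)]
PHASES = HALF + [-p for p in HALF]                 # symmetric phase shifts
ORIENTATIONS = [0.0, math.pi / 4, -math.pi / 4]    # 0 = horizontally tuned unit


def unit_response(dh, dv, phase, theta):
    """Idealised phase-shift unit: Gabor-shaped tuning to the disparity
    component along its axis (a simplification, not the published filters)."""
    delta = dh * math.cos(theta) + dv * math.sin(theta)
    envelope = math.exp(-delta ** 2 / (4 * SIGMA ** 2))
    return 1.0 + envelope * math.cos(OMEGA * delta - phase)


def population(dh, dv):
    return [unit_response(dh, dv, p, t) for t in ORIENTATIONS for p in PHASES]


def centre_of_mass(dh):
    """Decision-based decoding: each horizontal unit's response is weighted by
    its preferred disparity (phase / OMEGA)."""
    rs = [unit_response(dh, 0.0, p, 0.0) for p in PHASES]
    return sum(r * p / OMEGA for r, p in zip(rs, PHASES)) / sum(rs)


def solve(a, b):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_readout(target, dh_grid, dv_grid, ridge=1e-2):
    """Weights minimising J(w) = sum over (dh, dv) of (w . r(dh, dv) - target(dh))^2
    + ridge * |w|^2. Matching target(dh) encodes goal (1); demanding the same
    output for every dv encodes goal (2). Hypothetical functional."""
    rows = [population(dh, dv) for dh in dh_grid for dv in dv_grid]
    ys = [target(dh) for dh in dh_grid for dv in dv_grid]
    n = len(rows[0])
    ata = [[sum(r[i] * r[j] for r in rows) + (ridge if i == j else 0.0)
            for j in range(n)] for i in range(n)]
    aty = [sum(r[i] * y for r, y in zip(rows, ys)) for i in range(n)]
    return solve(ata, aty)


def readout(weights, dh, dv=0.0):
    """Decision-free vergence signal: a weighted sum of all unit responses."""
    return sum(w * r for w, r in zip(weights, population(dh, dv)))


dh_grid = [0.25 * k for k in range(-12, 13)]   # horizontal disparities, -3..3
dv_grid = [-1.0, 0.0, 1.0]                     # vertical disparities to be ignored
# Hypothetical target tunings: odd, linear through zero, broad (LONG) or narrow (SHORT).
w_long = fit_readout(lambda d: 2.0 * math.tanh(d / 2.0), dh_grid, dv_grid)
w_short = fit_readout(lambda d: d * math.exp(-d * d / 2.0), dh_grid, dv_grid)

for d in (-4.0, -1.0, 0.5, 1.0, 4.0):
    print(f"dh={d:+.1f}  CoM={centre_of_mass(d):+.3f}  "
          f"LONG={readout(w_long, d):+.3f}  SHORT={readout(w_short, d):+.3f}")
```

Because the toy population and the training grid are symmetric under dh -> -dh and the targets are odd, the least-squares weights make each readout odd-symmetric, as the desired tuning curve requires. A library routine such as NumPy's `numpy.linalg.lstsq` could replace the hand-written `solve`.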

The model, tested in a simple virtual environment consisting of a frontoparallel plane with a random-dot texture (Fig.1 left), produces the correct change of vergence both for a steady plane and for a plane moving in depth, even when disparities larger than the FR are present in the scene. Thus, the model is able first to bring the disparities of the scene back inside the FR, in order to ensure the effectiveness of the disparity map, and then to keep the fixation point stable on the surface of the object in the fovea.
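The interplay between the two modes and T0 can be illustrated with a small closed-loop simulation. This Python sketch abstracts the population away entirely: `long_signal`, `short_signal` and `t0_signal` are hypothetical closed-form stand-ins for the LONG, SHORT and T0 curves of Fig.1 (right), and the widths, the gains `k_fast` and `k_slow`, the hard threshold `T0_THRESHOLD` and the time step are assumed values, not the model's. Whenever T0 exceeds the threshold the slow SHORT mode drives vergence; otherwise the fast LONG mode does. The steady-plane run starts with 6 deg of disparity, and the moving-plane run adds a constant drift to the required vergence.

```python
import math

# Hypothetical signal widths (deg) and switching threshold, chosen only to
# illustrate the switching logic; disparities and vergence angles are in degrees.
S_LONG, S_SHORT, S_T0 = 3.0, 0.5, 0.3
T0_THRESHOLD = 0.5


def long_signal(d):
    """Broad odd tuning: keeps the correct sign even for large disparities."""
    return math.tanh(d / S_LONG)


def short_signal(d):
    """Narrow odd tuning: linear around zero, fading out for large disparities."""
    return d * math.exp(-d * d / (2 * S_SHORT ** 2))


def t0_signal(d):
    """Even tuning peaked at zero disparity, used here only to select the mode."""
    return math.exp(-d * d / (2 * S_T0 ** 2))


def simulate(target, vergence0, steps=500, dt=0.01, k_fast=20.0, k_slow=5.0):
    """Closed-loop vergence: the fast mode is driven by LONG (k_fast in deg/s),
    the slow mode by SHORT (k_slow in 1/s), and T0 picks the mode at every step.

    target(t) is the vergence angle that would null the foveal disparity at
    time t, so the disparity is target(t) minus the current vergence angle.
    """
    vergence = vergence0
    disparities, modes = [], []
    for n in range(steps):
        d = target(n * dt) - vergence
        if t0_signal(d) > T0_THRESHOLD:
            mode, velocity = "SHORT", k_slow * short_signal(d)
        else:
            mode, velocity = "LONG", k_fast * long_signal(d)
        vergence += velocity * dt
        disparities.append(d)
        modes.append(mode)
    return disparities, modes


# Steady plane: 6 deg of disparity at the start, beyond an assumed FR of about 1 deg.
steady, steady_modes = simulate(lambda t: 8.0, vergence0=2.0)
# Plane moving in depth: the required vergence grows by 0.5 deg/s.
moving, moving_modes = simulate(lambda t: 8.0 + 0.5 * t, vergence0=2.0)

print("steady:", steady_modes[0], "->", steady_modes[-1], f"final d = {steady[-1]:.4f}")
print("moving:", moving_modes[0], "->", moving_modes[-1], f"final d = {moving[-1]:.4f}")
```

In the steady case the mode trace reads LONG until the disparity falls within T0's range and SHORT from then on, with the residual disparity decaying towards zero. For the moving plane the slow mode settles at a small constant tracking error (roughly 0.1 deg with these assumed gains), as expected when a velocity command proportional to the error follows a ramp.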

A. Gibaldi, M. Chessa, A. Canessa, S.P. Sabatini, F. Solari

Department of Biophysical and Electronic Engineering (DIBE), University of Genoa
